Veteran's Lens

When the Watchdog Doesn't Bark

Silence in a monitoring system is indistinguishable from health, and the system is structured so that you only test the alert path during the exact event you are trying to detect.

Jason Walker

I had been watching a cron stack run for weeks expecting nothing. The crons fired on schedule, the logs grew, the systems they monitored stayed up. No alerts arrived. I read the silence as evidence everything was fine.

The silence was a lie. For two weeks the alert path itself was broken. Every cron that failed in that window produced no signal. A daily backtest job had been throwing PermissionError every night and nobody knew. A daily collector had been hitting a permission race and writing retry storms into its own log file, none of which ever reached me. A weekly script had been writing zero-byte logs since the moment it started failing. The watchdog had been asleep the whole time, and I had been treating its silence as a clean bill of health.

What broke was almost embarrassing. An environment file holding the bot token for my alert channel had picked up Windows line endings somewhere along the way. When the shell loaded the file via eval, the carriage return at the end of the token line became part of the variable's value. Every curl call after that built a URL with a stray carriage return in the middle of the path, and the API rejected it as malformed. Curl exited zero because the request had been "sent": unless it is told otherwise (for example with --fail), curl treats an HTTP error response as a completed transfer. The wrapper script saw exit zero and assumed the alert went through. The wrapper had no eyes. Nothing checked whether the message arrived.
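To make the line-ending failure concrete, here is a minimal Python sketch of loading a KEY=VALUE file defensively. It is not the wrapper from the story; the names `parse_env`, `assert_clean`, and `ALERT_BOT_TOKEN` are hypothetical, and the file format is assumed to be simple one-line assignments.

```python
import re

# Any ASCII control character (including "\r") is suspicious inside a token.
_CONTROL_CHARS = re.compile(r"[\x00-\x1f\x7f]")


def parse_env(text: str) -> dict:
    """Parse KEY=VALUE lines, tolerating Windows (CRLF) line endings.

    Hypothetical helper: assumes one assignment per line, '#' comments,
    and optional surrounding quotes around the value.
    """
    env = {}
    for raw in text.split("\n"):
        line = raw.rstrip("\r").strip()  # drop the stray carriage return
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        env[key.strip()] = value.strip().strip('"').strip("'")
    return env


def assert_clean(name: str, value: str) -> str:
    """Refuse to build a URL from a value that contains control characters."""
    if _CONTROL_CHARS.search(value):
        raise ValueError(f"{name} contains a control character: {value!r}")
    return value


if __name__ == "__main__":
    crlf_file = "ALERT_BOT_TOKEN=abc123\r\n"
    # A naive split keeps the carriage return glued to the token.
    naive = crlf_file.split("\n")[0].partition("=")[2]
    print(repr(naive))                      # the value still ends in "\r"
    print(parse_env(crlf_file))             # the value is clean
```

Calling `assert_clean` right before the URL is built turns an invisible character into a loud failure at load time, which is cheaper than discovering it two weeks later.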

The lesson I keep coming back to is that monitoring discussions almost always focus on what to detect. Should we alert on this? At what threshold? What is the right severity? Almost no time is spent on whether the path that delivers the alert actually works. We treat the alert pipeline as infrastructure that is either there or not, and we assume it is there because it was set up once. That assumption ages badly. A bot token rotates. A webhook URL changes. A credential expires. A line ending sneaks in from a different operating system. Each of these breaks the path without breaking the producer. The producer keeps emitting signals into a hole. The receiving end stays quiet. And quiet looks the same as healthy.

You can run this thought experiment against any monitoring system you operate. If your SIEM stopped emailing alerts tomorrow, how long would it take you to notice? If the answer is "the next time something happens," the answer is also "you would not notice for as long as nothing happens, which is the only window during which you needed the alerts to work." A SOC with a broken email integration looks like a SOC with no incidents. A vulnerability scanner that fails to publish its weekly summary looks like a clean scan. A change management bot that silently drops half its messages looks like a quiet change window. The shared failure mode is that silence is indistinguishable from health, and the system is structured so that you only test the alert path during the exact event you are trying to detect.

The fix is to treat the alert path as a tested capability, not as background infrastructure. Test detection rules and test delivery on the same cadence. Inject a deliberate failure into each alert channel on a schedule that you can write into a runbook. Make the failure produce a known signature. Verify the signature lands where it is supposed to land, on the device or in the channel that the person on call actually checks. If the signature does not arrive, the path is broken even if the test script returned zero. The exit code lies. The receipt does not.
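A delivery test along these lines might look like the sketch below. It assumes two hooks that you would supply yourself: `send`, which pushes a message through the real alert path, and `read_recent`, which returns recent messages as seen from the receiving side (a channel history call, a mailbox read, whatever the on-call person actually looks at). Both names, and `run_canary` itself, are hypothetical.

```python
import time
import uuid
from typing import Callable, Iterable


def run_canary(
    send: Callable[[str], None],
    read_recent: Callable[[], Iterable[str]],
    timeout_s: float = 30.0,
    poll_s: float = 1.0,
) -> bool:
    """Send a uniquely tagged test alert and confirm it appears at the receiver.

    Returns True only when the signature is observed on the receiving side.
    A clean return from `send` is deliberately not treated as success.
    """
    signature = f"CANARY-{uuid.uuid4().hex}"
    try:
        send(f"[test] deliberate failure {signature}")
    except Exception:
        # The sender itself failed, so the path is broken.
        return False

    deadline = time.monotonic() + timeout_s
    while True:
        # The receipt, not the exit code, decides the outcome.
        if any(signature in msg for msg in read_recent()):
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)


if __name__ == "__main__":
    # In-memory stand-ins for a real channel, for illustration only.
    inbox = []
    print(run_canary(inbox.append, lambda: list(inbox), timeout_s=1, poll_s=0.1))
    # A sender that "succeeds" but delivers nowhere fails the canary.
    print(run_canary(lambda m: None, lambda: [], timeout_s=1, poll_s=0.1))
```

The unique signature matters: matching on a fresh random tag means an old test message still sitting in the channel cannot make a broken path look healthy.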

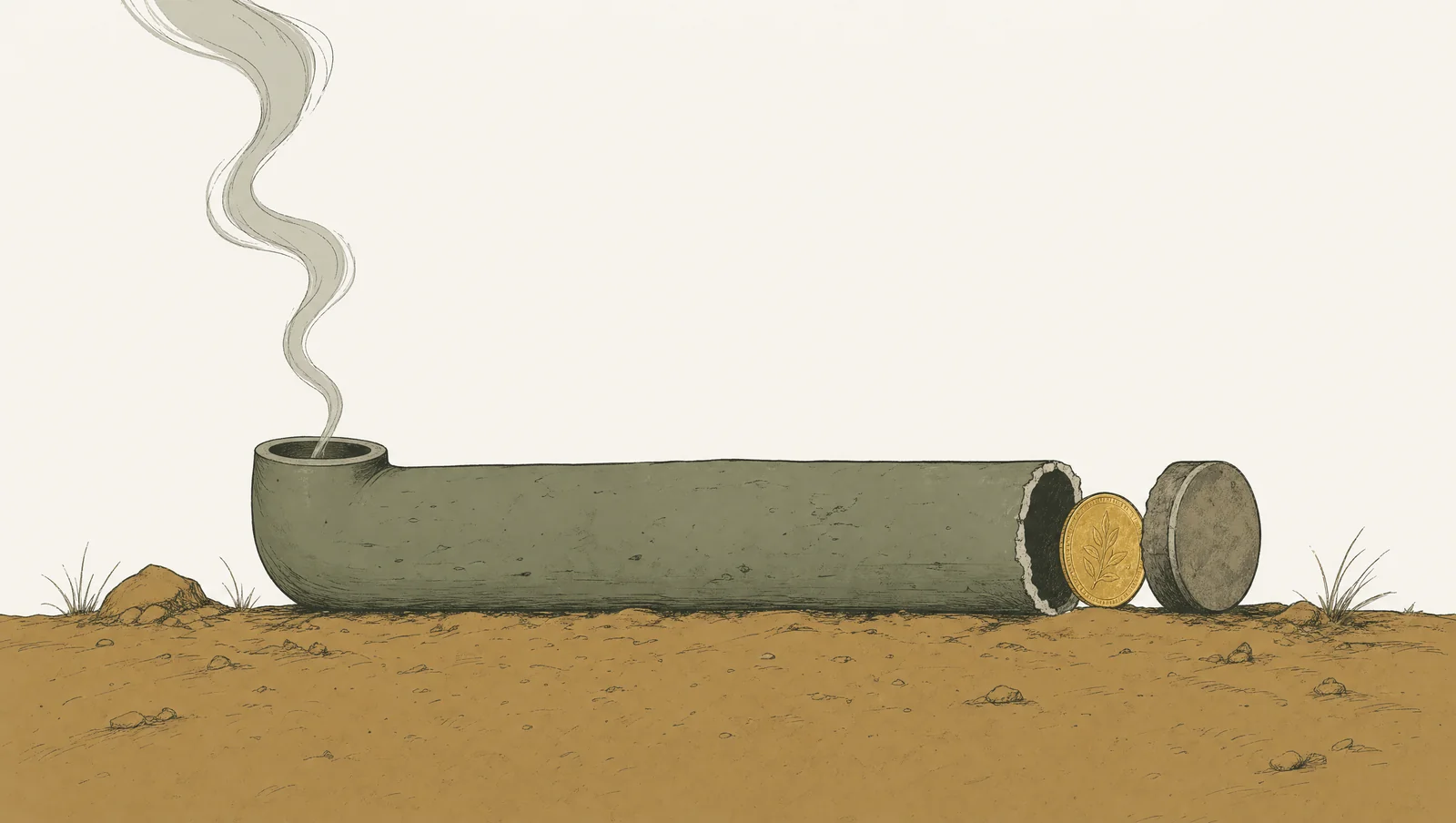

The same logic applies to anything that produces a downstream signal. Reports that "complete successfully" with no recipients. Webhooks that return 200 but never reach the consumer. Health endpoints that return 200 because they only check the load balancer is up, not the application behind it. The pattern is everywhere once you start looking for it. A system that emits a signal into a broken pipe is not monitoring you. It is performing monitoring at you. There is a difference, and the difference is invisible until something fails and the silence holds.
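The health endpoint case can be sketched the same way. The function below is a hypothetical aggregator, not any framework's API: it assumes you pass in one probe per dependency that the application actually needs, and it reports 200 only when every probe passes.

```python
from typing import Callable, Dict, Tuple


def deep_health(checks: Dict[str, Callable[[], bool]]) -> Tuple[int, Dict[str, str]]:
    """Return (HTTP status, per-dependency report).

    `checks` maps a dependency name to a zero-argument probe (hypothetical;
    for example a database ping or a queue round trip).
    """
    report = {}
    for name, check in checks.items():
        try:
            report[name] = "ok" if check() else "failing"
        except Exception as exc:
            # A probe that blows up is a failing dependency, not a crash.
            report[name] = f"error: {exc}"
    # An endpoint with no probes proves nothing, so it is not reported healthy.
    healthy = bool(report) and all(state == "ok" for state in report.values())
    return (200 if healthy else 503), report


if __name__ == "__main__":
    print(deep_health({"database": lambda: True, "queue": lambda: False}))
```

Treating an empty check list as unhealthy is a design choice in the spirit of the argument here: a 200 that was never earned by a real probe is just another kind of silence.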

So if there is one place to spend an hour this week, spend it on this. Pick the three alert channels you most depend on. Force a known failure into each one. See whether the alert arrives. If it does, you have earned the right to trust silence as a signal. If it does not, your watchdog has been asleep for some unknown length of time and you do not yet know what got through during the window when nothing was barking.
