Public-Sector CISO

AI Governance Is a Security Problem, Not a Policy Exercise

Most states treat AI governance as a compliance checkbox. Here's why that framing guarantees failure, and what the security-first approach looks like.

Jason Walker

6 min read

Most state governments are doing AI governance backwards.

They stand up a council, write a policy, maybe run a pilot with guardrails, and call it governance. The press release goes out. The checkbox gets checked. And somewhere downstream, an agency quietly feeds a generative AI tool sensitive constituent data because nobody built the enforcement layer to stop them.

I watch this pattern from a seat that sees across dozens of agencies, hundreds of thousands of devices, and a threat surface that grows every time someone in a program office decides to "just try" a new AI tool. What states are calling governance is mostly documentation. Documentation is not governance. Governance is control.

Let me explain the distinction, because it matters enormously.

The Policy-Control Gap

UC Berkeley's recent report on AI governance found that the vast majority of states have established some form of AI governance. That sounds encouraging until you read what "some form" covers. In many cases it's an executive order, a council, and a list of best practices. That's a starting point. It's not a security posture.

The report is right that AI governance needs to be embedded in procurement, cybersecurity, and operational workflows rather than treated as a separate compliance process. That is exactly the right frame. But the reason that integration is hard isn't organizational inertia. It's that most agencies lack the technical controls to operationalize any policy they write. You can prohibit employees from entering sensitive data into AI systems, but if you have no data loss prevention tooling, no API inspection, and no audit logging on those tools, the prohibition is only a suggestion.
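To make the difference between a prohibition and a control concrete, here is a minimal Python sketch of a gateway that sits in front of an AI tool, blocks prompts that match sensitive-data patterns, and writes an audit record for every decision. `PromptGateway`, `SENSITIVE_PATTERNS`, and both regular expressions are hypothetical placeholders; real DLP tooling relies on far richer detection than two regexes.

```python
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical detection rules; a real policy would derive these from the
# agency's data classification scheme, not from two regexes.
SENSITIVE_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


@dataclass
class PromptGateway:
    """Hypothetical proxy between staff and an AI tool: inspect, decide, log."""

    audit_log: list = field(default_factory=list)

    def submit(self, user: str, prompt: str) -> bool:
        """Return True if the prompt may be forwarded, False if it is blocked."""
        hits = sorted(name for name, pat in SENSITIVE_PATTERNS.items() if pat.search(prompt))
        allowed = not hits
        # Every decision is logged, allowed or blocked, so enforcement is auditable.
        # The prompt text itself is NOT stored, so the log holds no sensitive data.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "allowed": allowed,
            "matched": hits,
        })
        return allowed


gw = PromptGateway()
print(gw.submit("analyst1", "Summarize this memo about road repairs"))  # True
print(gw.submit("analyst1", "Applicant SSN is 123-45-6789"))  # False
```

The audit record stores which rule matched rather than the prompt, so the log does not become a second copy of the data the control exists to protect.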

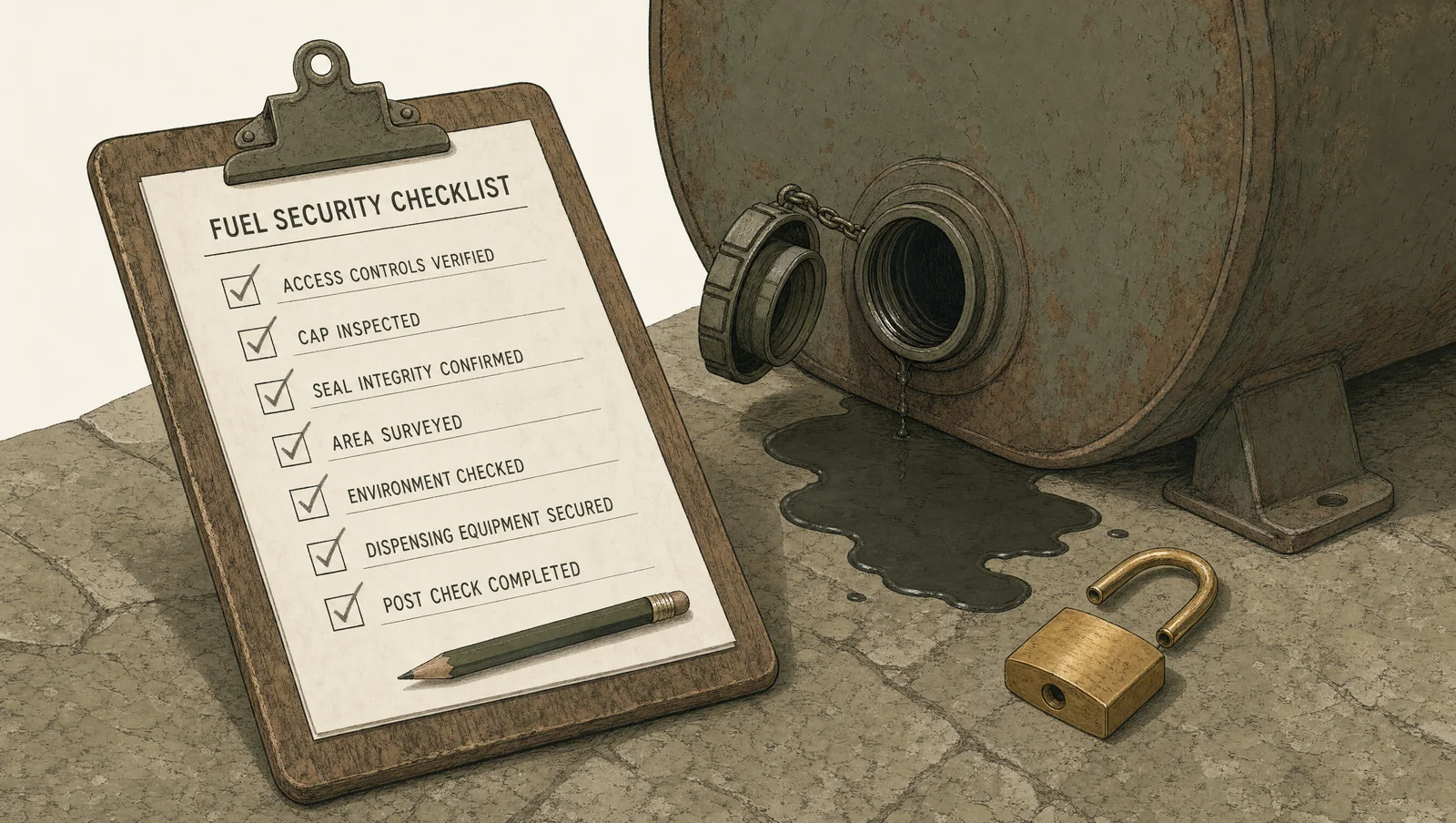

Aviation safety culture built its entire model on this truth: a checklist without a mechanism is theater. The pre-flight check works because it's tied to physical verification, not just attestation. You don't ask the pilot if the fuel caps are on. You look. Security governance for AI needs the same physicality. The control has to exist in the environment, not just in the document.

Where the Risk Actually Lives

Here's what most AI governance conversations miss: the attack surface isn't just the AI system. It's everything the AI system touches.

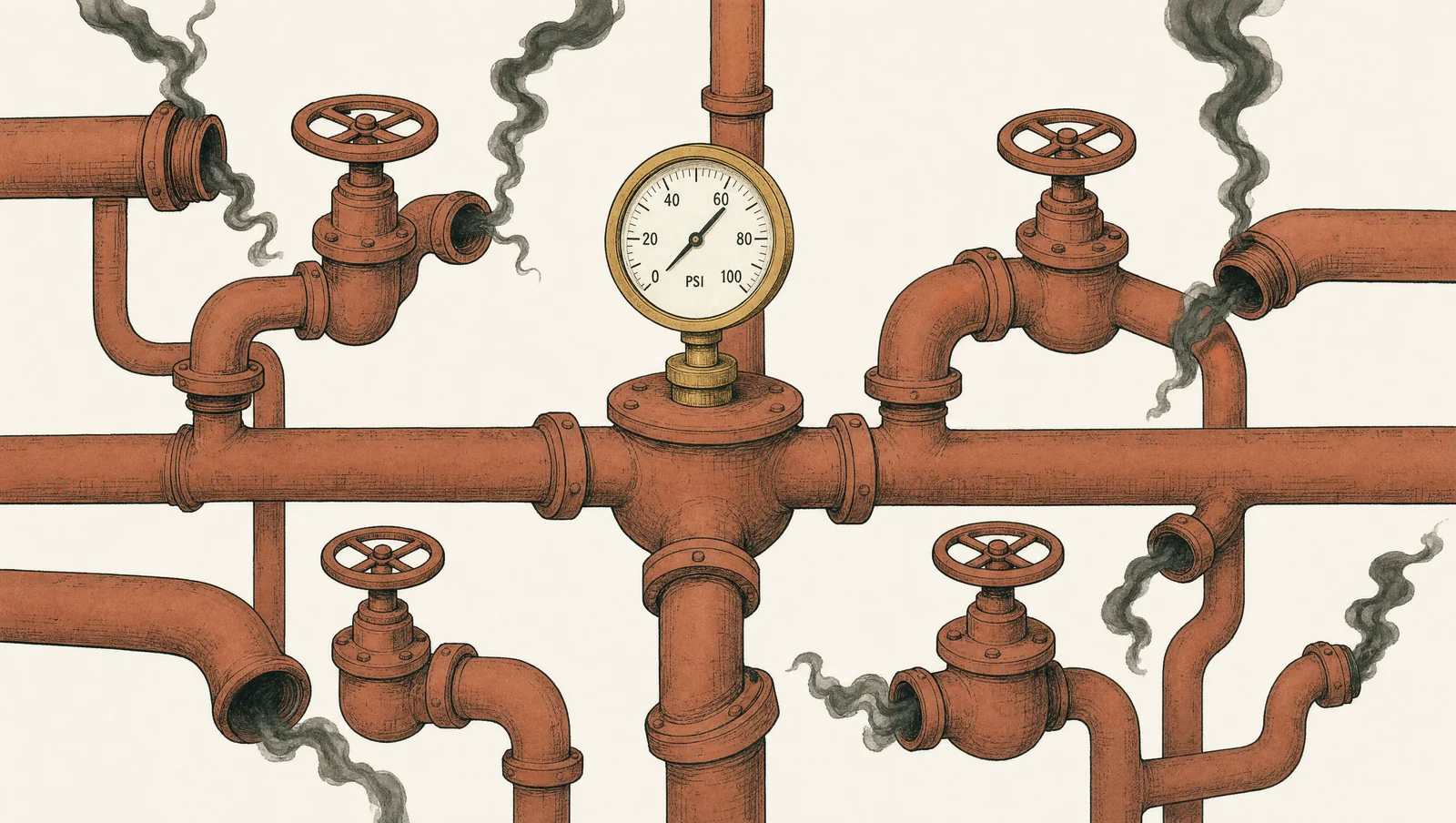

When an agency deploys a generative AI tool for document summarization, benefits processing, or constituent communication, that tool ingests data. It often calls external APIs. It runs on infrastructure that may or may not be patched, logged, or monitored. The risk is not primarily that the AI hallucinates. The risk is that a poorly integrated AI tool becomes a new path into systems that were already underprotected.

The Berkeley report mentions AI-powered cyberattacks and attacks targeting AI systems as risks agencies need to assess. Both are real. But in state government, the more immediate threat is simpler and more embarrassing: an AI integration with overprivileged access that exposes data it never needed to touch. Adversaries love complexity. Every new tool with a poorly scoped service account is an invitation.
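Overprivilege is checkable rather than a matter of opinion. A minimal sketch, assuming each AI integration's service account has a list of granted permission scopes and the security review has recorded the scopes the tool actually needs. The account name and scope strings such as `docs:read` are invented for illustration.

```python
def excess_scopes(granted: set[str], required: set[str]) -> set[str]:
    """Scopes the service account holds but the AI integration does not need."""
    return granted - required


def review_service_account(name: str, granted: set[str], required: set[str]) -> dict:
    """Summarize least-privilege status for one (hypothetical) service account."""
    extra = excess_scopes(granted, required)
    return {
        "account": name,
        "excess": sorted(extra),               # candidates for revocation
        "missing": sorted(required - granted),  # the tool may fail without these
        "least_privilege": not extra,
    }


# Hypothetical summarization tool that only needs to read documents.
report = review_service_account(
    "svc-ai-summarizer",
    granted={"docs:read", "docs:write", "benefits:read"},
    required={"docs:read"},
)
print(report)
# {'account': 'svc-ai-summarizer', 'excess': ['benefits:read', 'docs:write'], 'missing': [], 'least_privilege': False}
```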

Running enterprise cybersecurity at the scale of a large state means I spend a lot of time thinking about blast radius. Not just "what could go wrong with this tool in isolation" but "if this goes wrong, how far does it reach, and how fast can we detect it?" Most agencies evaluating AI tools are not asking those questions. They're asking "does it work" and "does legal approve it."
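The blast-radius question can be approximated by modeling access as a directed graph, where an edge means "this component holds credentials or a network path to that one," and walking it breadth-first from the AI tool. The graph and node names below are hypothetical; in practice the graph would be assembled from identity and asset inventories.

```python
from collections import deque


def blast_radius(access: dict[str, list[str]], start: str) -> dict[str, int]:
    """Breadth-first search: every node reachable from `start`, with its hop count."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in access.get(node, []):
            if nxt not in dist:  # first visit is the shortest path in an unweighted graph
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


# Hypothetical access graph: the AI tool reaches a service account, which
# reaches two data stores, one of which links onward to benefits data.
access = {
    "ai-summarizer": ["svc-docs"],
    "svc-docs": ["doc-store", "case-db"],
    "case-db": ["benefits-db"],
}
print(blast_radius(access, "ai-summarizer"))
# {'ai-summarizer': 0, 'svc-docs': 1, 'doc-store': 2, 'case-db': 2, 'benefits-db': 3}
```

A tool meant only to summarize documents that can reach `benefits-db` in three hops is the overprivileged integration described above, made visible.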

What Security-First AI Governance Actually Requires

If I were building this from scratch, the sequence would look like this.

Before any AI tool goes live, it goes through the same security review as any other third-party system: vendor security assessment, data classification review, access control scoping, logging requirements. Not because AI is magic and scary, but because it's software with access to data, and that's always a security problem.
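As a sketch of what that review looks like once it is a gate rather than a memo, the function below refuses go-live until all four review items named above are recorded as passed. The check identifiers, `ReviewResult`, and `security_gate` are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass

# The four review items from the text; the identifiers are hypothetical.
REQUIRED_CHECKS = (
    "vendor_security_assessment",
    "data_classification_review",
    "access_control_scoping",
    "logging_requirements",
)


@dataclass
class ReviewResult:
    approved: bool
    outstanding: list[str]


def security_gate(completed: dict[str, bool]) -> ReviewResult:
    """Approve go-live only if every required check is recorded as passed.

    A check that is missing from `completed` counts as not passed, so the
    default is to block rather than to allow.
    """
    outstanding = [c for c in REQUIRED_CHECKS if not completed.get(c, False)]
    return ReviewResult(approved=not outstanding, outstanding=outstanding)


result = security_gate({
    "vendor_security_assessment": True,
    "data_classification_review": True,
})
print(result.approved, result.outstanding)
# False ['access_control_scoping', 'logging_requirements']
```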

Procurement is where control is cheapest. It is far easier to write security provisions into a contract before a tool is deployed than to enforce them afterward. Procurement integration isn't just a governance best practice. It's leverage, and you should use it.

Human oversight requirements need to be operationalized, not just stated. "A human reviews AI outputs before action is taken" is a fine policy sentence. It means nothing without a workflow that makes the review mandatory, auditable, and time-stamped. The oversight layer has to be built into the process, not bolted on as an honor system.
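A minimal sketch of review as a mechanism rather than an honor system: the hypothetical `AIOutput` class below refuses to let anyone act on an AI output until a named reviewer has approved it, and records a UTC timestamp for both steps. It keeps history in an in-memory list for brevity; a real workflow would need persistent, tamper-evident storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


class ReviewRequired(Exception):
    """Raised when someone tries to act on an AI output nobody has reviewed."""


@dataclass
class AIOutput:
    """Hypothetical wrapper around one AI-generated recommendation."""

    output_id: str
    text: str
    reviewed_by: Optional[str] = None
    reviewed_at: Optional[str] = None
    history: list = field(default_factory=list)  # (event, person, UTC timestamp)

    def approve(self, reviewer: str) -> None:
        self.reviewed_by = reviewer
        self.reviewed_at = datetime.now(timezone.utc).isoformat()
        self.history.append(("approved", reviewer, self.reviewed_at))

    def act(self, actor: str) -> str:
        # The workflow, not the employee's memory, enforces the review step.
        if self.reviewed_by is None:
            raise ReviewRequired(f"{self.output_id} has not been reviewed")
        self.history.append(("acted", actor, datetime.now(timezone.utc).isoformat()))
        return f"{self.output_id} executed by {actor}"


out = AIOutput("case-42", "Recommend approval")
try:
    out.act("clerk")
except ReviewRequired as exc:
    print("blocked:", exc)  # blocked: case-42 has not been reviewed
out.approve("supervisor")
print(out.act("clerk"))  # case-42 executed by clerk
```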

Monitoring after deployment is where most frameworks fall apart. Agencies treat go-live as the finish line. In security, go-live is when the real work starts. AI systems drift. Their outputs change as models are updated by vendors. Their integrations accumulate technical debt. Continuous monitoring isn't a luxury. It's the only way to know if the system you approved six months ago still resembles the system running today.
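One narrow but useful form of continuous monitoring is comparing the running deployment's security-relevant configuration against the baseline approved at review time. The sketch below assumes that configuration can be exported as a dictionary; the keys and values shown are hypothetical. It catches a changed model version label or a newly enabled plugin. It does not catch a vendor changing model behavior behind the same version label, which requires monitoring outputs as well.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable SHA-256 hash of a deployment's settings (keys sorted for determinism)."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def drift(approved: dict, current: dict) -> dict:
    """Keys whose values differ between the approved baseline and what runs now.

    Each entry maps key -> (approved_value, current_value); None means absent.
    """
    keys = approved.keys() | current.keys()
    return {
        k: (approved.get(k), current.get(k))
        for k in sorted(keys)
        if approved.get(k) != current.get(k)
    }


# Hypothetical baseline captured at security review, and the live config today.
approved = {"model_version": "2024-01", "endpoints": ["api.vendor.example"], "scopes": ["docs:read"]}
current = {"model_version": "2024-06", "endpoints": ["api.vendor.example"], "scopes": ["docs:read"],
           "plugins": ["web-search"]}

print(fingerprint(approved) != fingerprint(current))  # True
print(drift(approved, current))
# {'model_version': ('2024-01', '2024-06'), 'plugins': (None, ['web-search'])}
```

The fingerprint makes a cheap scheduled check possible; the diff tells the reviewer what changed when the fingerprints stop matching.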

The Accountability Problem

Here's the hardest part, the part most governance frameworks paper over: accountability.

When an AI system makes a bad decision that harms a constituent, who owns it? The agency head? The vendor? The program office that configured the tool? If the answer isn't clear before deployment, it won't get clearer after an incident. It'll just get political.

Real accountability structures name specific roles, specific approval authority, and specific consequences. They exist in statute or executive order with enough specificity to be enforced. "Clear accountability structures" as a recommendation is easy to write. Building them requires someone with enough organizational authority to make them stick, and enough political will to enforce them when a high-profile program wants an exception.

That's the job. Not writing the policy. Enforcing it when it's inconvenient.

What States Should Actually Do

Stop treating AI governance as a policy function that happens to touch security. Treat it as a security function that requires policy to operate. Put the CISO in the accountability chain for AI deployments, not as an optional reviewer, but as a required approver with authority to block deployments that fail security review.

Build the controls before you write the policies. If you can't enforce it technically, don't promise you can enforce it procedurally.

And accept that the frameworks built today will need to be rebuilt. AI capabilities are changing fast enough that any governance model with a three-year shelf life is already outdated before it's published. The goal isn't a finished framework. The goal is a program with the institutional muscle to adapt as the technology changes.

Documentation doesn't protect data. Controls do. Build the controls.
